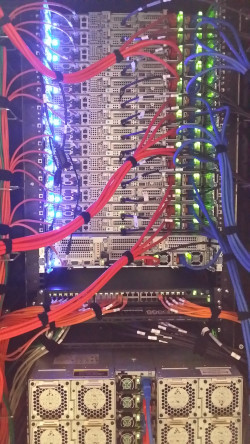

Hosted at the University of Namur, this system currently consists of 1536 cores spread across 30 AMD Epyc Naples and 32 Intel Sandy Bridge compute nodes.

The AMD nodes include:

- 24 nodes with a single 32-core AMD Epyc 7551P @ 2.0 GHz and 256 GB RAM

- 4 nodes with the same CPUs but 512 GB RAM

- 2 nodes with dual 32-core AMD Epyc 7501 @ 2.0 GHz and 2 TB RAM

The 32 Intel nodes feature dual 8-core Xeon E5-2660 CPUs @ 2.2 GHz with 64 GB RAM (128 GB RAM on 8 of them).
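As a quick consistency check, the per-node figures add up to the quoted total of 1536 cores. The sketch below encodes the inventory as (nodes, sockets per node, cores per socket). The dictionary layout and its labels are our own illustration, not a site-provided file.

```python
# (nodes, sockets per node, cores per socket), transcribed from the node list
NODES = {
    "AMD Epyc 7551P, 256 GB": (24, 1, 32),
    "AMD Epyc 7551P, 512 GB": (4, 1, 32),
    "AMD Epyc 7501, 2 TB": (2, 2, 32),
    "Intel Xeon E5-2660": (32, 2, 8),
}


def total_cores(nodes: dict) -> int:
    """Sum nodes x sockets x cores-per-socket over all node groups."""
    return sum(n * sockets * cores for n, sockets, cores in nodes.values())


print(total_cores(NODES))  # 1536
```

The AMD groups contribute 768 + 128 + 128 = 1024 cores and the Intel nodes 32 × 16 = 512.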

All nodes are interconnected via a 10 Gigabit Ethernet network and have access to three NFS filesystems totaling 100 TB.

Suitable for:

Shared-memory parallel jobs (OpenMP, Pthreads) or resource-intensive sequential workloads, especially large-memory jobs.
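Shared-memory programs can only use the cores of a single node, so their thread count should match the CPUs granted to the job. The following is a minimal sketch, assuming the scheduler is Slurm (suggested by the mention of partitions on this page, but not stated) and that the job was submitted with `--cpus-per-task`. It derives `OMP_NUM_THREADS` from `SLURM_CPUS_PER_TASK` and falls back to 1 thread outside a job. The function name `openmp_env` is hypothetical.

```python
import os


def openmp_env(base=None) -> dict:
    """Copy of the environment with OMP_NUM_THREADS set from Slurm.

    Assumes Slurm exports SLURM_CPUS_PER_TASK (set when the job uses
    --cpus-per-task); falls back to a single thread otherwise.
    """
    env = dict(os.environ if base is None else base)
    cpus = env.get("SLURM_CPUS_PER_TASK", "")
    env["OMP_NUM_THREADS"] = cpus if cpus.isdigit() and int(cpus) > 0 else "1"
    return env
```

A Python wrapper could then start a (hypothetical) OpenMP executable with `subprocess.run(["./my_openmp_app"], env=openmp_env(), check=True)`.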

Resources

- Home directory (200 GB quota per user)

- Working directory: /workdir (400 GB, $WORKDIR)

- Local working directory: /scratch ($LOCALSCRATCH), dynamically defined in jobs

- Nodes have internet access

- default queue* (max 15 days)

- hmem queue* (≥ 64 GB/core, max 15 days)

- Max 128 CPUs per user across all partitions
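Because `$LOCALSCRATCH` is only defined inside a job, a script that should also run interactively needs a fallback. The following is a minimal sketch, assuming one prefers node-local scratch for temporary files, then `$WORKDIR`, then the system temporary directory. The ordering and the `job_scratch_dir` name are our own choices, not a site policy.

```python
import os
import tempfile
from pathlib import Path


def job_scratch_dir(env=None) -> Path:
    """Pick a directory for temporary job files.

    Tries $LOCALSCRATCH (node-local, defined only inside a job), then
    $WORKDIR (/workdir), then the system temporary directory.
    """
    env = os.environ if env is None else env
    for var in ("LOCALSCRATCH", "WORKDIR"):
        value = env.get(var)
        if value and Path(value).is_dir():
            return Path(value)
    return Path(tempfile.gettempdir())
```

Node-local scratch is likely tied to the job's lifetime, so results worth keeping should be copied back to `$WORKDIR` or the home directory before the job ends.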
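The queue limits can be expressed as a simple pre-submission check. The following is a minimal sketch, assuming the hmem threshold refers to the memory requested per core and that the 128-CPU cap counts the CPUs of all of a user's jobs together. `JobRequest` and `pick_queue` are hypothetical names. The scheduler itself enforces the real limits.

```python
from dataclasses import dataclass

# Limits as stated on this page.
MAX_WALLTIME_HOURS = 15 * 24   # default and hmem queues: max 15 days
MAX_CPUS_PER_USER = 128        # summed over all partitions
HMEM_MIN_GB_PER_CORE = 64      # hmem queue: >= 64 GB per core


@dataclass
class JobRequest:
    cpus: int
    mem_per_cpu_gb: float
    walltime_hours: float


def pick_queue(job: JobRequest, cpus_already_used: int = 0) -> str:
    """Return 'hmem' or 'default', or raise ValueError if a limit is exceeded.

    Queue names follow this page; the actual Slurm partition names may
    differ (hypothetical mapping).
    """
    if job.walltime_hours > MAX_WALLTIME_HOURS:
        raise ValueError("walltime exceeds the 15-day limit")
    if cpus_already_used + job.cpus > MAX_CPUS_PER_USER:
        raise ValueError("request would exceed 128 CPUs per user")
    if job.mem_per_cpu_gb >= HMEM_MIN_GB_PER_CORE:
        return "hmem"
    return "default"
```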

Access / Support

SSH to hercules2.ptci.unamur.be (port 22) via your university gateway using your login and an id_rsa.ceci key.

Support: ptci.support@unamur.be

Server SSH key fingerprints:


- RSA: SHA256:LzByp8XBhpgy+2lB1DZcpieYUCSq8FEfLBLPm+WB8xg

- ED25519: SHA256:fHuc0Y+QuAZW2FrI9NXrfDt2CeDmVWD6wHeDW4I3ztw

- ECDSA: SHA256:SyLaaBe7CuO7Dpa6vJa0vbAUxnYSpl30xaJo5yBF//c
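On the first connection, the SSH client displays the server's key fingerprint, which should match one of the values published here. `ssh-keygen -l -f <file>` computes the same value. The sketch below shows what a SHA256 fingerprint is: the base64 encoding, with padding removed, of the SHA-256 digest of the decoded public-key blob. The function names are hypothetical. Mapping every `ecdsa-*` key type to the ECDSA entry is our assumption, since the curve is not stated.

```python
import base64
import hashlib
import struct

# Fingerprints published for hercules2.ptci.unamur.be (copied from this page).
PUBLISHED = {
    "RSA": "SHA256:LzByp8XBhpgy+2lB1DZcpieYUCSq8FEfLBLPm+WB8xg",
    "ED25519": "SHA256:fHuc0Y+QuAZW2FrI9NXrfDt2CeDmVWD6wHeDW4I3ztw",
    "ECDSA": "SHA256:SyLaaBe7CuO7Dpa6vJa0vbAUxnYSpl30xaJo5yBF//c",
}


def openssh_fingerprint(pubkey_line: str) -> str:
    """SHA256 fingerprint of a '<type> <base64-blob> [comment]' key line."""
    parts = pubkey_line.split()
    if len(parts) < 2:
        raise ValueError("expected '<type> <base64-blob> [comment]'")
    key_type, blob = parts[0], base64.b64decode(parts[1], validate=True)
    # The blob begins with a length-prefixed string naming the key type;
    # checking it against the first field catches mismatched input.
    if len(blob) < 4:
        raise ValueError("key blob too short")
    (name_len,) = struct.unpack(">I", blob[:4])
    if blob[4:4 + name_len] != key_type.encode("ascii"):
        raise ValueError("key type does not match the encoded blob")
    digest = hashlib.sha256(blob).digest()
    # OpenSSH prints standard base64 of the digest with '=' padding removed.
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")


def family(key_type: str):
    """Map an OpenSSH key type to the labels used on this page (assumption)."""
    if key_type.startswith("ecdsa-"):
        return "ECDSA"
    return {"ssh-rsa": "RSA", "ssh-ed25519": "ED25519"}.get(key_type)


def matches_published(pubkey_line: str) -> bool:
    """True if the key's fingerprint equals the published one for its type."""
    expected = PUBLISHED.get(family(pubkey_line.split()[0]))
    return expected is not None and openssh_fingerprint(pubkey_line) == expected
```

For a line obtained with `ssh-keyscan`, drop the leading host-name field before passing it in.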